Exploring partnering well with AI

- Leanne Holdsworth

- Dec 10, 2025

Over the holidays, I properly switched off. I needed to.

I did, however, make one small exception: I spent some time listening, reading, and thinking about AI and its impact on leadership and human work.

This isn’t a new area of interest for me, but over the break one longer-term question kept resurfacing: as AI becomes more capable over time, will anything remain uniquely human in leadership? I am biased towards one answer: yes, of course there will. But might I be wrong?

Like so much of the rapidly changing AI landscape right now, experts disagree on the long-term question of whether everything eventually becomes computable, and therefore whether nothing in leadership remains uniquely human.

Some believe that ultimately there will be nothing left that humans can do better than machines. Others argue that even if AI can simulate the qualities we associate with leadership, something essential about being human in leadership will not be reducible to computation: for example, lived experience, judgement, care, or meaning.

But the more I sit with it, the more I wonder whether this debate, played out largely by technologists, futurists, and AI commentators, is actually the wrong place for leaders to spend their energy.

Because even if the first group is right, and AI becomes better than humans at almost everything we currently call “leadership”, it still doesn’t carry responsibility. It doesn’t live with the consequences of its decisions, carry their ongoing human cost, or repair trust when things fracture.

And it doesn’t answer to other humans when harm is done.

Maybe it is just me, but I found this a relief, and I wanted to share it. Many questions about the future of organisations feel unanswerable to me as I think about the impact of AI. On this one, though, we as leaders don’t need to get drawn into a philosophical argument about whether anything is uniquely human.

We don’t need to predict the future perfectly. And we don’t need to compete with machines on capability.

What we do need to get better at is different.

As AI absorbs more of the cognitive load (analysis, planning, pattern recognition, optimisation), leadership shifts away from having the answers and towards being accountable for the impact.

That places a new weight on a set of capabilities we’ve often treated as “nice to have”:

- judgement when there is no clear right answer
- moral and ethical accountability when human cost is involved
- relational trust
- sense-making
- the ability to hold tension rather than rush to certainty
- designing conditions where humans can thrive, not just perform.

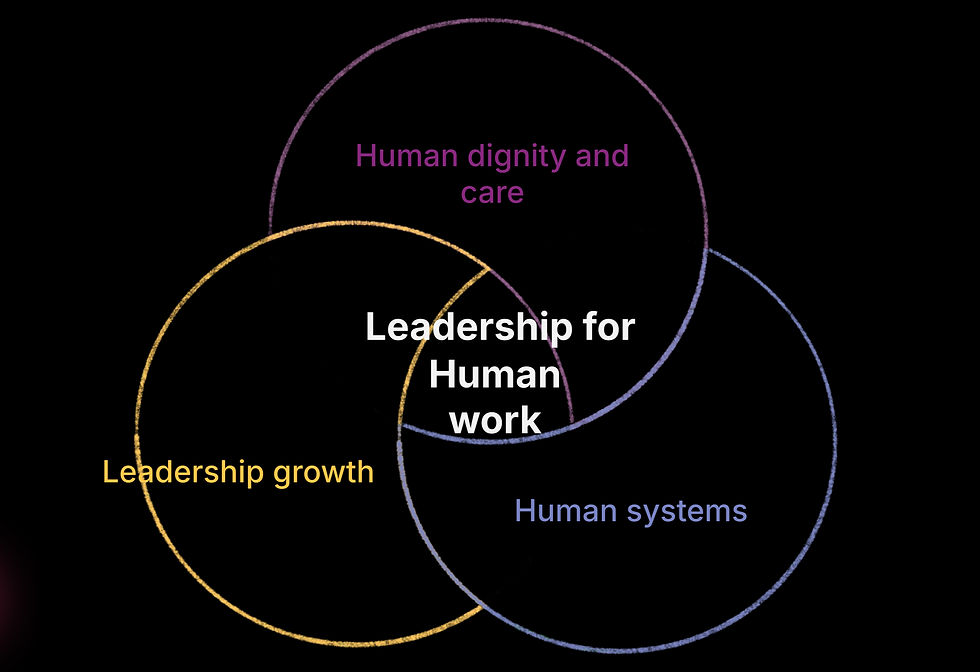

This is where I see Human Work offering a helpful lens.

In our work, we often talk about three shifts needed to create genuinely human workplaces: places where people can do good work without it costing them more than it should.

Human dignity and care: Accepting that work either erodes or upholds human dignity and that we create those conditions through our choices and how we treat each other. Taking responsibility, individually and collectively, for designing work that strengthens both human wellbeing and performance.

Human systems: Recognising that small choices create systems. Understanding that everyday decisions about pace, priorities, incentives, trade-offs, and how people are treated quietly shape culture over time.

Leadership growth: If I want to change things “out there”, I need to change things “in here”. Accepting that leadership impact is shaped not just by structures but by the inner assumptions, fears, habits, and reflexes leaders bring into the system.

Through that lens, AI doesn’t reduce the need for human leadership; it sharpens it.

As AI becomes more capable, leaders become less responsible for doing the work and more responsible for the conditions the work happens in, the systems those conditions create, and the human and organisational impact that follows.

The future of leadership isn’t about protecting what’s “uniquely human” from machines. It’s about taking responsibility for the human consequences of the systems we’re building.

Sitting with this over the holidays, quite literally slowing down enough to make things with my hands again, reminded me how much leadership is shaped by the questions we keep returning to.

In a world where AI will keep changing faster than we can predict, I’m less convinced that leaders need better answers. I’m more convinced they need better questions.

My attention is now less on “what will machines replace over the long term?” and more on “what are leaders responsible for, today and into the future?” as we learn to partner with AI whilst enabling human workplaces.
